|

mlpack

master

|



Classes

| class | mlpack::optimization::Adam< DecomposableFunctionType > |

| | Adam is an optimizer that computes individual adaptive learning rates for different parameters from estimates of first and second moments of the gradients. |

Namespaces

| mlpack | Linear algebra utility functions, generally performed on matrices or vectors. |

| mlpack::optimization | |

Adam optimizer. Adam is an algorithm for first-order gradient-based optimization of stochastic objective functions, based on adaptive estimates of lower-order moments.
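To make the description above concrete, here is a minimal sketch of the standard Adam update rule written in plain C++. It is not the implementation in adam.hpp and does not use the mlpack API: `AdamState` and `AdamStep` are hypothetical names introduced for illustration, and the default values (step size 0.001, beta1 0.9, beta2 0.999, epsilon 1e-8) are the commonly cited defaults for Adam, assumed here rather than taken from this file.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of mlpack): running state for Adam.
struct AdamState
{
  std::vector<double> m; // First-moment (mean) estimate of the gradient.
  std::vector<double> v; // Second-moment (uncentered variance) estimate.
  std::size_t t = 0;     // Number of update steps taken so far.

  explicit AdamState(std::size_t n) : m(n, 0.0), v(n, 0.0) {}
};

// One Adam step (hypothetical function): updates `params` in place using the
// gradient of the objective evaluated at the current `params`.
void AdamStep(std::vector<double>& params,
              const std::vector<double>& gradient,
              AdamState& state,
              const double stepSize = 0.001,
              const double beta1 = 0.9,
              const double beta2 = 0.999,
              const double eps = 1e-8)
{
  ++state.t;

  // Bias-correction factors: both moment estimates start at zero, so early
  // estimates are biased toward zero and are rescaled by 1 / (1 - beta^t).
  const double c1 = 1.0 - std::pow(beta1, static_cast<double>(state.t));
  const double c2 = 1.0 - std::pow(beta2, static_cast<double>(state.t));

  for (std::size_t i = 0; i < params.size(); ++i)
  {
    const double g = gradient[i];

    // Exponential moving averages of the gradient and the squared gradient.
    state.m[i] = beta1 * state.m[i] + (1.0 - beta1) * g;
    state.v[i] = beta2 * state.v[i] + (1.0 - beta2) * g * g;

    const double mHat = state.m[i] / c1;
    const double vHat = state.v[i] / c2;

    // Per-parameter step: each coordinate is scaled by its own
    // second-moment estimate, which gives the adaptive learning rate.
    params[i] -= stepSize * mHat / (std::sqrt(vHat) + eps);
  }
}
```

A caller would evaluate the gradient of its objective at the current parameters (on a mini-batch, in the stochastic setting) and pass it to `AdamStep` once per iteration. Because the step is divided by the square root of the second-moment estimate, the size of the first update is close to `stepSize` regardless of the gradient's magnitude.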

mlpack is free software; you may redistribute it and/or modify it under the terms of the 3-clause BSD license. You should have received a copy of the 3-clause BSD license along with mlpack. If not, see http://www.opensource.org/licenses/BSD-3-Clause for more information.

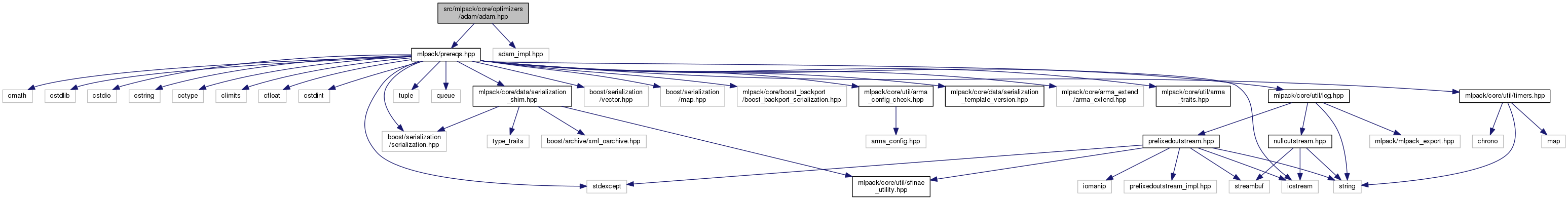

Definition in file adam.hpp.

1.8.11
